Meta has confirmed earlier reports that it has deployed a homegrown data-center inference chip. Fabricated in TSMC’s N5 process, the second-generation Meta Training and Inference Accelerator (MTIA) is part of Meta’s broader AI-infrastructure investment. The new MTIA AI accelerator (NPU) is deployed and running production workloads.

Notables

- Ranking and recommendation models are MTIA’s targets. Recommendation models propose content to users, and ranking systems order these suggestions. A video service, for example, could use them to suggest a movie to a customer based on that customer’s viewing history and demographics and those of similar users. In Meta’s case, the models select advertisements to show users. Better-targeted ads benefit the viewer, advertiser, and ad network (Meta). The immediate commercial value of accelerating these models justifies Meta’s investment in a custom alternative to off-the-shelf GPUs.

- Performance and efficiency—Meta reports that on actual models the new MTIA delivers three times the throughput of its first-generation chip. This would be more impressive if power didn’t also increase 3.6×. We infer from Meta’s comments, however, that system power decreases because a single host processor can connect to two MTIA v2 chips instead of only one v1, halving the number of power-hungry hosts.

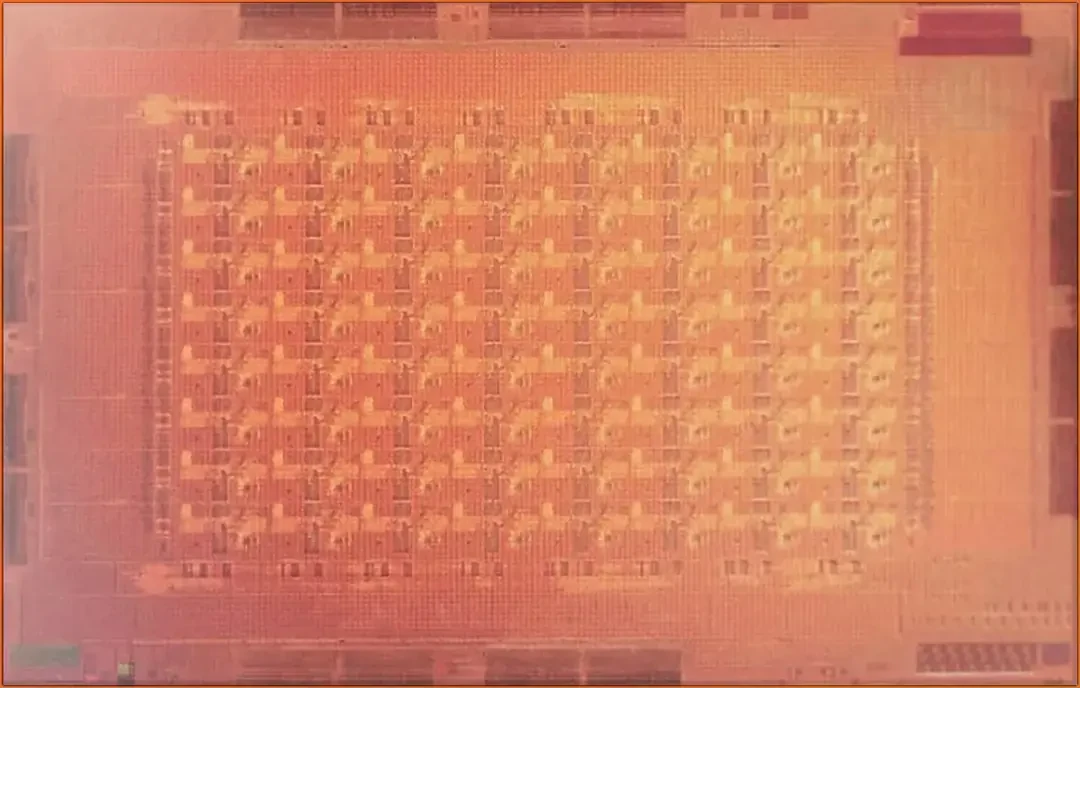

- Architecture—The MTIA comprises an 8×8 array of processing elements, each including two custom RISC-V cores, fixed-function units such as a multiply-accumulate (MAC) array, and a 384 KB local memory. At least one of the two cores implements the RISC-V vector extension. The chip’s power and PCIe interface could enable Meta to deploy it on add-in cards distributed among ordinary servers. The company, however, builds a chassis holding 12 two-accelerator boards and groups three chassis per rack. A chassis appears to be 2U in Meta’s photos, demonstrating the rack density Meta achieves with 90W chips. The chassis is likely to conform to the Open Compute Project’s Yosemite V3 spec.

- Software—An NPU project can fail solely because of inadequate software. Meta, however, is an AI-software leader, having developed the deep-learning recommendation model (DLRM), the Llama large-language model, and PyTorch. The company has integrated MTIA with PyTorch and has developed a Triton compiler back end for the NPU.
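
The arithmetic behind the performance, efficiency, and density figures above can be sketched as follows. This is a back-of-the-envelope illustration, not Meta’s methodology: the constants are the figures quoted in the bullets, the function and variable names are made up for the example, the v1 chip power is inferred by dividing 90W by 3.6, and the host power is a hypothetical parameter because the article doesn’t state it.

```python
# Figures quoted in the article; all names here are illustrative.
THROUGHPUT_GAIN = 3.0    # v2 vs. v1 throughput on real models
POWER_GAIN = 3.6         # v2 vs. v1 chip power
V2_CHIP_TDP_W = 90.0     # single-chip TDP of the new MTIA

BOARDS_PER_CHASSIS = 12
CHIPS_PER_BOARD = 2
CHASSIS_PER_RACK = 3


def chip_perf_per_watt_gain(throughput_gain: float, power_gain: float) -> float:
    """Chip-level throughput per watt of v2 relative to v1."""
    return throughput_gain / power_gain


def system_perf_per_watt_gain(host_power_w: float) -> float:
    """Throughput per watt of (host + 2 x v2) relative to (host + 1 x v1).

    host_power_w is an assumption; the article gives no host figure.
    The v1 chip power is inferred from the quoted 3.6x power increase.
    """
    v1_chip_w = V2_CHIP_TDP_W / POWER_GAIN
    # Normalize v1 throughput to 1.0 per chip.
    v1 = 1.0 / (host_power_w + v1_chip_w)
    v2 = (2 * THROUGHPUT_GAIN) / (host_power_w + 2 * V2_CHIP_TDP_W)
    return v2 / v1


# Chip-level efficiency change and rack-level totals.
chip_gain = chip_perf_per_watt_gain(THROUGHPUT_GAIN, POWER_GAIN)
chips_per_rack = BOARDS_PER_CHASSIS * CHIPS_PER_BOARD * CHASSIS_PER_RACK
rack_accelerator_power_w = chips_per_rack * V2_CHIP_TDP_W

if __name__ == "__main__":
    print(f"chip perf/W gain: {chip_gain:.2f}x")
    print(f"accelerators per rack: {chips_per_rack}")
    print(f"accelerator power per rack at TDP: {rack_accelerator_power_w:.0f} W")
    print(f"system perf/W gain, 200 W host (hypothetical): "
          f"{system_perf_per_watt_gain(200.0):.2f}x")
```

Under these assumptions, chip-level throughput per watt falls to about 0.83× that of the first generation, and a rack holds 72 accelerators drawing roughly 6.5 kW at TDP. Once a host is counted, throughput per watt improves for any host drawing more than about 6W, which is why sharing a host between two chips matters.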

Competition

GPUs can accelerate recommendation and ranking models, but their memory hierarchy isn’t well suited to these workloads. Compared with the Nvidia L40S, the new Meta NPU has 2.7× more external DRAM. The MTIA additionally has substantial on-chip memory, 256 MB. Meta’s NPU also consumes less power: 90W (single-chip TDP) compared with 350W (whole-board maximum) for the L40S. On a like-for-like basis, the power ratio could be closer to 2:1. Raw computing performance accounts for much of the difference between the MTIA and the L40S; for example, the L40S delivers twice the INT8 and FP16 matrix-math TOPS of the MTIA.
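
A rough way to combine the power and TOPS figures above is to compare peak TOPS per watt. The sketch below uses only the numbers in the preceding paragraph; the names are illustrative, and the 2:1 like-for-like power ratio is the article’s estimate rather than a measured value.

```python
# Figures from the comparison above; names are illustrative.
MTIA_TDP_W = 90.0               # single-chip TDP
L40S_BOARD_MAX_W = 350.0        # whole-board maximum
L40S_TOPS_RATIO = 2.0           # L40S peak TOPS / MTIA peak TOPS
LIKE_FOR_LIKE_POWER_RATIO = 2.0 # article's estimate, not a measurement


def mtia_tops_per_watt_advantage(power_ratio: float, tops_ratio: float) -> float:
    """MTIA peak TOPS/W relative to the L40S.

    power_ratio = L40S power / MTIA power
    tops_ratio  = L40S TOPS / MTIA TOPS
    """
    return power_ratio / tops_ratio


nominal_power_ratio = L40S_BOARD_MAX_W / MTIA_TDP_W
nominal_advantage = mtia_tops_per_watt_advantage(nominal_power_ratio, L40S_TOPS_RATIO)
like_for_like_advantage = mtia_tops_per_watt_advantage(
    LIKE_FOR_LIKE_POWER_RATIO, L40S_TOPS_RATIO
)

if __name__ == "__main__":
    print(f"nominal power ratio: {nominal_power_ratio:.2f}:1")
    print(f"MTIA TOPS/W advantage (nominal ratings): {nominal_advantage:.2f}x")
    print(f"MTIA TOPS/W advantage (like-for-like): {like_for_like_advantage:.2f}x")
```

With the nominal ratings, the MTIA’s peak TOPS-per-watt advantage is about 1.9×. On the like-for-like 2:1 estimate, the two come out roughly even, consistent with raw compute explaining much of the power gap; peak TOPS says nothing about how the memory hierarchy affects delivered throughput on recommendation models.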

For training and other applications, Meta will continue to employ commercial GPUs, such as the AMD MI300 and Nvidia Blackwell. As a leading developer of AI software and models, Meta is likely to deploy relatively more training systems than other companies, which will predominantly focus on inference. Nonetheless, Meta has huge inference workloads, and MTIA will displace GPUs and other NPUs.

Bottom Line

Developing inference NPUs in house should yield a chip better adapted to the company’s requirements and less expensive to purchase, owing to Meta’s scale. Marginally increasing ad clicks—and, therefore, revenue—by improving ad selection can add billions to an ad network’s bottom line. Facing equally well-resourced Google and Amazon, Meta had to build its own NPU. The pressure is now greater for independent chip suppliers such as Nvidia and Tenstorrent to deliver comparable value to the next customer tier.