-

Bolt Confounds Skeptics, Tapes Out Zeus GPU

Startup GPU maker Bolt Graphics is on track to deliver development kits to lead customers by the end of the year, having taped out its first chip last month. By the end of 2027, the company expects to begin shipping PCIe cards based on a production version of its Zeus GPU. This schedule represents a…

-

Matrix Multiplication Comes to x86

The x86 Ecosystem Advisory Group (EAG, better known as AMD and Intel) has defined the AI Compute Extensions (ACE) for the x86 architecture. The group frames these instructions as a palette, a term Intel coined for a specific configuration of its Advanced Matrix Extensions (AMX). Those AI-focused instructions and associated hardware first appeared in Sapphire…

-

Blowout AMD & Intel Earnings, Google TPUv8, Astera Scorpio-X, RISC-V News, and More: It’s BWR Ep 13.

This episode features an in-depth discussion of the latest developments in data-center chips, AI accelerators, interconnect technologies, and RISC-V ecosystem growth. Experts analyze AMD and Intel earnings, Google TPUv8, Astera Labs’s Scorpio-X, and emerging startups, providing insights into market trends, technological innovations, and strategic shifts.

-

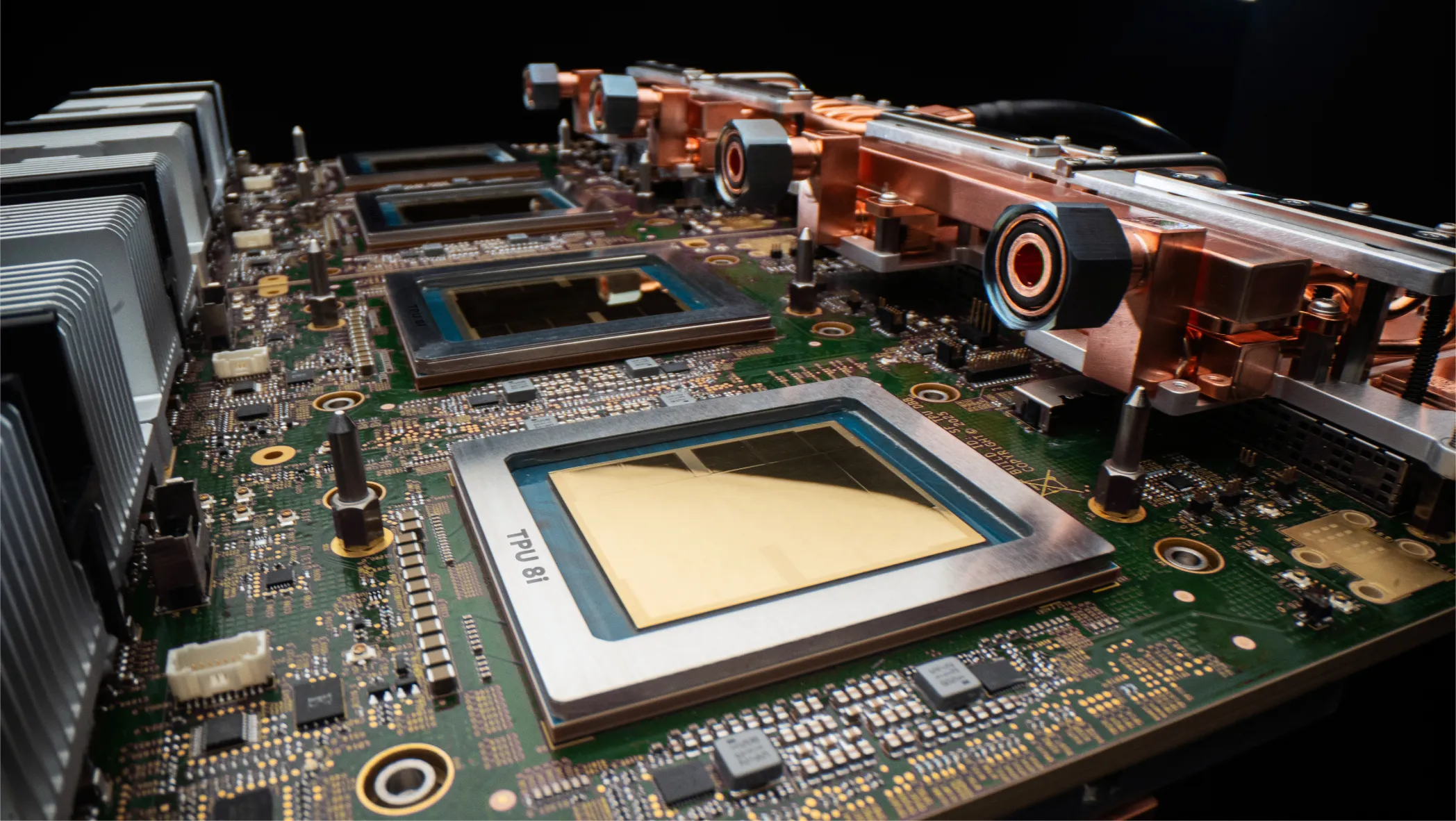

Google TPUv8: Early Specs and Performance Gains

Google has unveiled its eighth-generation AI accelerator, revealing a design similar to past designs but with greater per-socket throughput, faster networking, and new collective engines. Per-chip gains should improve cost per token and performance, particularly as large language models grow well beyond 1 trillion parameters. As with some previous generations, the new accelerator (NPU) has…

-

Hynix Infusion Helps Semidynamics Diversify into AI Chips

Memory giant SK Hynix has invested in RISC-V supplier Semidynamics, helping the CPU designer expand into chips, boards, and systems for data-center AI inference. The Spanish startup joins Arm and fellow European RISC-V supplier Codasip in moving beyond IP.

Semidynamics Evolves from RISC-V IP to AI Systems

Semidynamics began as a design services company. It…

Sponsored Content and Plugs

- BWR 12: The Memory Episode

- Slash Server Costs Without Hardware or Software Changes

- Ceva Boosts NeuPro-M NPU Throughput and Efficiency

- MEXT Helps IT Leaders Find the Sweet Spot

- Chips Act Backs Chiplets
