Hoary startup SambaNova has closed a $350 million Series E funding round, introduced the SN50 AI accelerator, and signed up SoftBank to deploy the new NPU. Following reports that Intel was considering acquiring the company, which Intel CEO Lip-Bu Tan chairs, the beleaguered giant merely joined the funding round and signed a collaboration agreement.

SambaNova aims to overcome bandwidth and utilization problems that limit GPU performance. The SN50’s technology refinements and the new funding position the company to address interactive and agentic AI applications. Founded in 2017, SambaNova commercializes concepts that a pair of Stanford professors developed. These include its data-flow technology and coarse-grain reconfigurable architecture (CGRA). The CGRA approach borrows from FPGAs. Whereas an advanced FPGA combines configurable logic modules equivalent to roughly 30 gates with a configurable on-chip interconnect, a CGRA device combines larger (coarser) function blocks with a similar interconnect.

SambaNova Architecture Overview

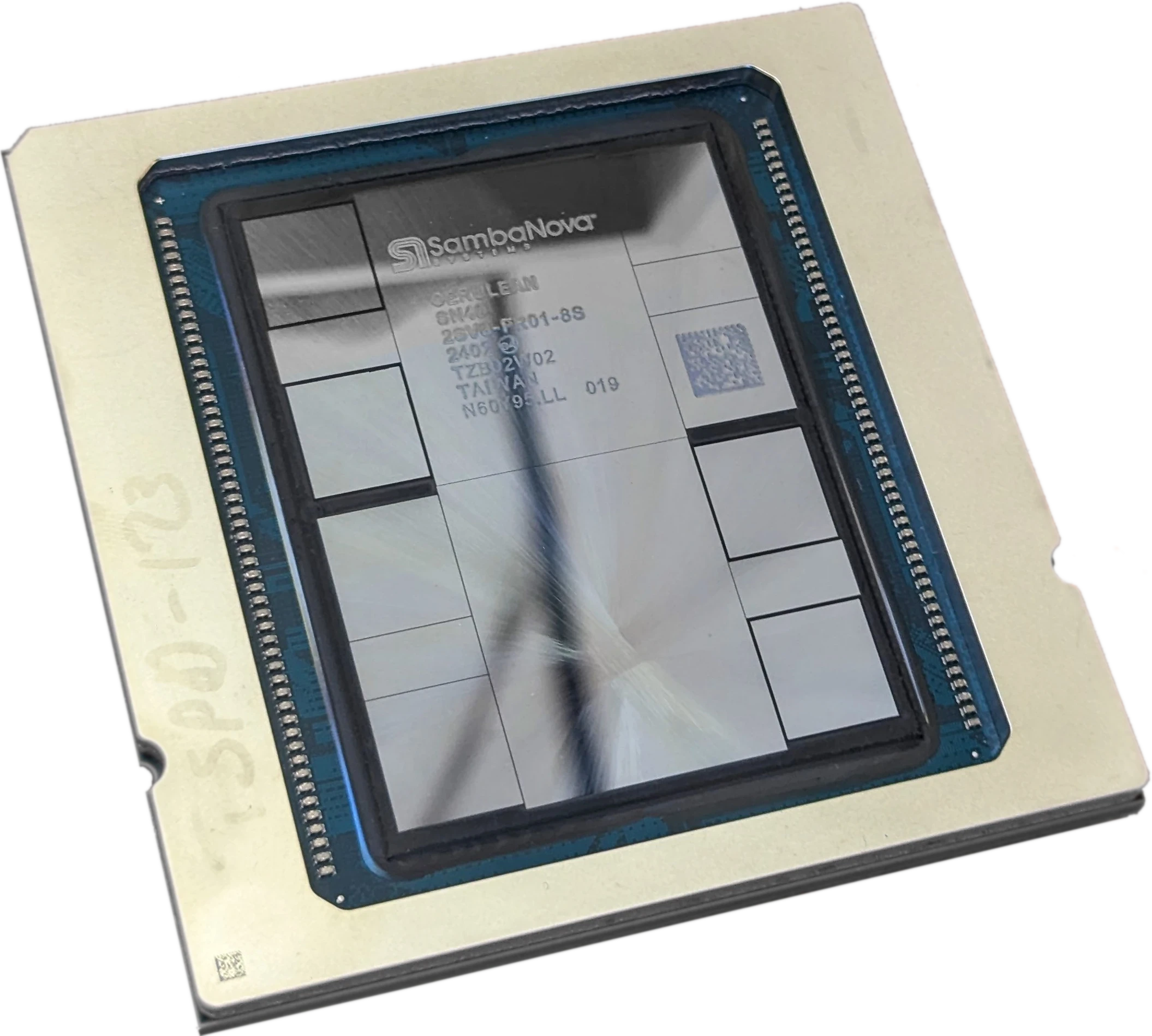

A SambaNova chip tiles computing and memory units. Each computing unit contains multiple parallel pipelines of SIMD vector engines, as Figure 1 shows. These pipelines feed into a banked memory, which provides inputs to downstream computing units. The company’s compiler maps a TensorFlow or other neural-network graph onto the chip, configuring the SIMD engines’ operations and the interconnect.
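The chain of computing units and banked memories can be illustrated with a toy mapping pass. This is a minimal sketch that assumes a purely linear chain of graph operations. The `Tile` class and `map_chain` function are hypothetical names, and SambaNova’s actual compiler handles general graphs, parallel pipelines, and interconnect routing, none of which this sketch attempts.

```python
from dataclasses import dataclass


@dataclass
class Tile:
    kind: str  # "compute" or "memory" (hypothetical tile types)
    op: str    # operation configured on a compute tile, or buffer held by a memory tile


def map_chain(ops: list[str]) -> list[Tile]:
    """Toy placement: give each op a compute tile, then a memory tile that
    buffers the op's output for the next (downstream) compute tile."""
    tiles: list[Tile] = []
    for op in ops:
        tiles.append(Tile("compute", op))
        tiles.append(Tile("memory", f"{op}_out"))
    return tiles


layout = map_chain(["matmul", "add_bias", "gelu"])
for t in layout:
    print(t.kind, t.op)
```

Alternating compute and memory tiles mirrors the description above, in which each pipeline writes to a banked memory that feeds the next computing unit.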

Once the chip is configured to execute a model, data ripples through it. By contrast, a GPU may need to periodically pause computation to load or store intermediate results. The data flow can extend to adjacent chips, enabling a linear speedup as the scale-up domain adds NPUs. Moreover, SambaNova’s approach enables simultaneous processing and communication, further raising computing-unit utilization.
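The utilization gain from processing and communicating simultaneously comes down to simple arithmetic. This sketch assumes each step consists of one compute phase and one communication phase with hypothetical durations. It models only the overlap effect, not SambaNova’s dataflow scheduling or any particular GPU’s behavior.

```python
def step_time(compute_ms: float, comm_ms: float, overlap: bool) -> float:
    """Step duration when compute and communication are serialized or overlapped."""
    return max(compute_ms, comm_ms) if overlap else compute_ms + comm_ms


def utilization(compute_ms: float, comm_ms: float, overlap: bool) -> float:
    """Fraction of the step during which the computing units are busy."""
    return compute_ms / step_time(compute_ms, comm_ms, overlap)


# Hypothetical per-step costs, chosen only for illustration.
compute_ms, comm_ms = 8.0, 3.0
print(utilization(compute_ms, comm_ms, overlap=False))  # 8 / 11
print(utilization(compute_ms, comm_ms, overlap=True))   # 8 / 8
```

Overlap hides communication only while it takes no longer than computation. If `comm_ms` exceeds `compute_ms`, the overlapped step becomes communication-bound and utilization drops below 1.0 again.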

Whereas some competing NPUs rely on on-chip memory to raise utilization and lack external DRAM, SambaNova has supported DRAM since its first-generation product. The SN40L added HBM, as Figure 1 shows, and the forthcoming SN50 continues this three-tier approach of on-chip memory, HBM, and DRAM. The HBM and standard DRAM enable large context caches and quick swapping among models.
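A three-tier hierarchy lets software keep the hottest data in the fastest memory and spill the rest to larger, slower tiers. The following toy greedy placement illustrates the idea. The capacities are hypothetical round numbers, not SN40L or SN50 specifications, and the `Tier` class and `place` function are illustrative names rather than anything SambaNova ships.

```python
from dataclasses import dataclass


@dataclass
class Tier:
    name: str
    capacity_gb: float
    used_gb: float = 0.0


def place(items: list[tuple[str, float]], tiers: list[Tier]) -> dict[str, str]:
    """Greedily put each item (listed hottest first) in the fastest tier with room.

    `tiers` must be ordered fastest to slowest. Raises MemoryError if an item
    fits nowhere.
    """
    placement: dict[str, str] = {}
    for name, size_gb in items:
        for tier in tiers:
            if tier.used_gb + size_gb <= tier.capacity_gb:
                tier.used_gb += size_gb
                placement[name] = tier.name
                break
        else:  # no tier had room for this item
            raise MemoryError(f"{name} ({size_gb} GB) does not fit in any tier")
    return placement


# Hypothetical capacities (GB) for on-chip memory, HBM, and DRAM.
tiers = [Tier("SRAM", 0.5), Tier("HBM", 64.0), Tier("DRAM", 1024.0)]
items = [
    ("activation buffers", 0.4),
    ("model A weights", 40.0),
    ("KV cache", 20.0),
    ("model B weights", 60.0),
    ("model C weights", 60.0),
]
placement = place(items, tiers)
for name, tier in placement.items():
    print(f"{name}: {tier}")
```

In this example, the active model and its KV cache land in HBM while two idle models wait in DRAM. Swapping a different model in would mean moving its weights from DRAM to HBM, which is far faster than reloading them from storage.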

SambaNova SN50 Upgrades

Scheduled to ship by the end of the year, the 5 nm SN50 implements the following changes to the SN40L design:

- 5 × the FLOPS: the SN50 has 2.5 × the SN40L’s throughput on 16-bit (BF16) data and adds eight-bit floating-point (FP8) support at twice that rate. The chip can handle FP4 data to conserve memory but processes it in the FP8 data paths, yielding no additional FLOPS.

- Scalability: the SN50 scales up to 256 NPUs compared with 16 for the SN40L. Achieving this scalability requires multiple racks and is enabled through SambaNova’s proprietary Ethernet-based protocol. Communication still overlaps computation.

- 4 × the network throughput: in addition to adding Ethernet to extend scale-up across racks, the SN50 quadruples the per-chip network bandwidth.
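The FLOPS and memory claims in the list above reduce to a few multiplications. This sketch normalizes throughput to the SN40L’s BF16 rate and assumes 2, 1, and 0.5 bytes per parameter for BF16, FP8, and FP4. It ignores the block scale factors that practical FP4 formats store, and the 100-billion-parameter model is a hypothetical round number.

```python
# Relative peak throughput, normalized to the SN40L's BF16 rate (= 1.0).
SN40L_BF16 = 1.0
SN50_BF16 = 2.5 * SN40L_BF16  # 2.5x the SN40L's BF16 throughput
SN50_FP8 = 2.0 * SN50_BF16    # FP8 at twice the SN50's BF16 rate
SN50_FP4 = SN50_FP8           # FP4 runs on the FP8 data paths: no extra FLOPS

BYTES_PER_PARAM = {"BF16": 2.0, "FP8": 1.0, "FP4": 0.5}


def weight_gb(params_billion: float, fmt: str) -> float:
    """Weight storage in GB (1e9 bytes), ignoring scale factors and other overhead."""
    return params_billion * BYTES_PER_PARAM[fmt]


print("SN50 FP8 vs. SN40L BF16:", SN50_FP8 / SN40L_BF16)
for fmt in ("BF16", "FP8", "FP4"):
    print(fmt, weight_gb(100, fmt), "GB")  # hypothetical 100B-parameter model
```

The output shows why FP4 remains useful despite adding no FLOPS. It halves the weight footprint relative to FP8, so more of a model (or more models) fits in each memory tier.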

As it did with previous generations, SambaNova has developed SN50-based systems. The SambaRack integrates 16 SN50 NPUs and requires 20 kW, low enough for air-cooled data centers. The company continues to offer its own cloud-based services but positions them as a vehicle for marketing and developer relations.
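Combining the 256-NPU scale-up limit with the SambaRack figures gives a rough sense of a maximum configuration. The arithmetic assumes that such a system consists entirely of full 16-NPU SambaRacks, each drawing its full 20 kW. The per-NPU figure is a rack-level share that includes hosts, networking, and fans, so it bounds the chip’s own power from above rather than measuring it.

```latex
\begin{aligned}
N_{\text{racks}} &= \frac{256\ \text{NPUs}}{16\ \text{NPUs per rack}} = 16 \\
P_{\text{system}} &\approx 16 \times 20\ \text{kW} = 320\ \text{kW} \\
P_{\text{NPU}} &\le \frac{20\ \text{kW}}{16\ \text{NPUs}} = 1.25\ \text{kW}
\end{aligned}
```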

SoftBank is the SN50’s lead customer and will employ it in data centers in Japan. Previous SambaNova customers include First Data of Saudi Arabia and other sovereign AI projects. The Intel collaboration includes using Xeons as host processors and comarketing joint solutions through the bigger company’s channels.

Bottom Line

The recent funding enables SambaNova to continue operating, and the company’s $2 billion valuation reflects investor optimism. Sizable as that valuation is, however, it’s only 50% greater than the total funding the company has raised and half its peak. Now on its fifth-generation chip but with limited customer traction, SambaNova is surely testing investor patience.

Fabricated in a 5 nm process like its predecessor, the SN50 promises a significant upgrade at a lower development cost than a 3 nm implementation. SambaNova expects the SN50 to deliver relatively high per-user token rates (e.g., 600 tokens per second on the GPT-OSS-120B model) without sacrificing as much per-chip throughput as an Nvidia GPU does at these rates. If the startup can demonstrate this advantage in real systems, it will be better positioned to address interactive applications such as coding and chatting. The ability to hold KV cache and multiple models in local memory should help the SN50 excel at handling large context sizes and multimodel agentic workloads. Thus aligned with this year’s AI application trends, the SN50 is in a better competitive position than its predecessors were.
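The tension between per-user token rate and per-chip throughput can be illustrated with a toy decode model. At 600 tokens per second, each user’s next token must arrive within 1/600 s, about 1.67 ms. This sketch assumes that a decode step costs a fixed amount plus a per-sequence amount, so batching more users raises aggregate throughput but slows each user. The parameters are hypothetical and describe neither the SN50 nor any Nvidia GPU.

```python
def decode_step_s(batch: int, fixed_s: float, per_seq_s: float) -> float:
    """Toy decode-step latency: a fixed cost plus a cost per sequence in the batch."""
    return fixed_s + batch * per_seq_s


def per_user_tps(batch: int, fixed_s: float, per_seq_s: float) -> float:
    """Tokens per second seen by each user (one token per step)."""
    return 1.0 / decode_step_s(batch, fixed_s, per_seq_s)


def aggregate_tps(batch: int, fixed_s: float, per_seq_s: float) -> float:
    """Tokens per second across the whole batch."""
    return batch / decode_step_s(batch, fixed_s, per_seq_s)


def max_batch_for_target(target_tps: float, fixed_s: float, per_seq_s: float,
                         limit: int = 4096) -> int:
    """Largest batch whose per-user rate still meets target_tps (0 if none)."""
    best = 0
    for b in range(1, limit + 1):
        if per_user_tps(b, fixed_s, per_seq_s) >= target_tps:
            best = b
        else:
            break  # per-user rate only falls as the batch grows
    return best


FIXED_S, PER_SEQ_S = 1e-3, 2.5e-5  # hypothetical costs
for target in (120.0, 600.0):
    b = max_batch_for_target(target, FIXED_S, PER_SEQ_S)
    print(f"target {target:.0f} tok/s/user -> batch {b}, "
          f"aggregate {aggregate_tps(b, FIXED_S, PER_SEQ_S):.0f} tok/s")
```

In this model, raising the per-user target from 120 to 600 tokens per second shrinks the feasible batch from 293 to 26 and cuts aggregate throughput by more than half. An architecture that loses less throughput at high per-user rates would flatten this curve, which is the advantage SambaNova claims.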