-

Neurophos Taps Metamaterials for AI Revolution

Neurophos has raised $110 million to develop exaflop-scale photonic AI chips that use tunable metamaterials to break through the “power wall” facing traditional GPUs.

-

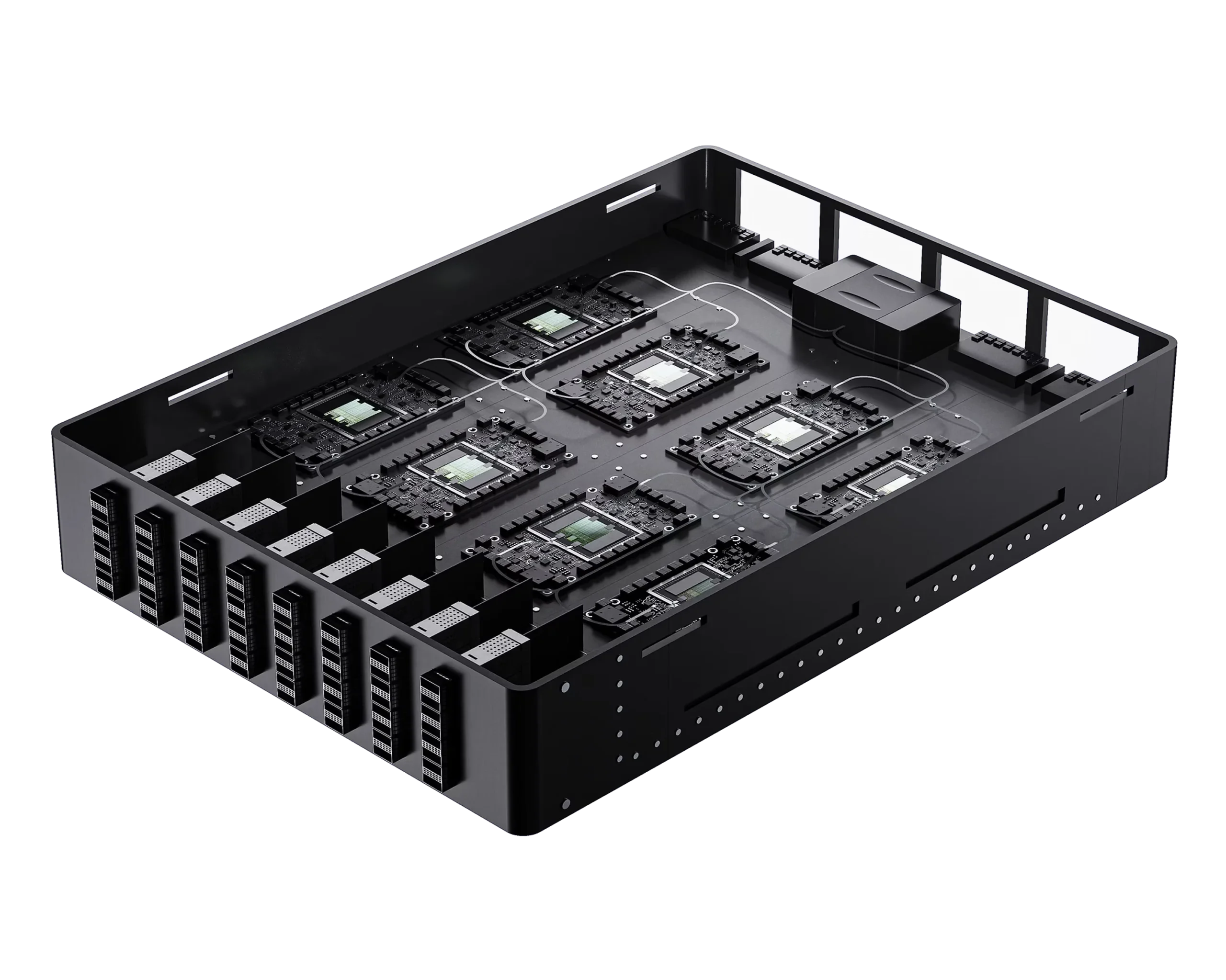

Microsoft Maia 200 Beats Blackwell’s Efficiency

Microsoft is deploying its second-generation AI accelerator. The new Maia 200 NPU targets inference, delivering raw performance similar to that of Nvidia's Blackwell while requiring much less power. Maia uses only Ethernet, whereas other accelerators use a different technology for local (scale-up) interconnect. Microsoft has withheld details; its statements indicate that Maia…

-

A Prodigy Turns 10: Tachyum Unveils a Universal Processor

The tachyon is a faster-than-light particle that cannot exist without violating known laws of physics. Tachyum is a company promising a processor that performs as well as a CPU, GPU, or NPU, which also shouldn’t be possible. Further threatening its existence, the company sued Cadence in 2022 after finding that IP blocks licensed from the EDA giant were deficient.…

-

Byrne-Wheeler Report Discusses Nvidia Context-Memory Device

Joe and Bob discuss Nvidia’s CES disclosures and funding news for AI startups such as Etched and Upscale AI, as well as Marvell’s acquisition of XConn. They also explore OpenAI’s partnership with Cerebras, SiFive gaining access to NVLink, and GlobalFoundries’ acquisition of ARC from Synopsys.

-

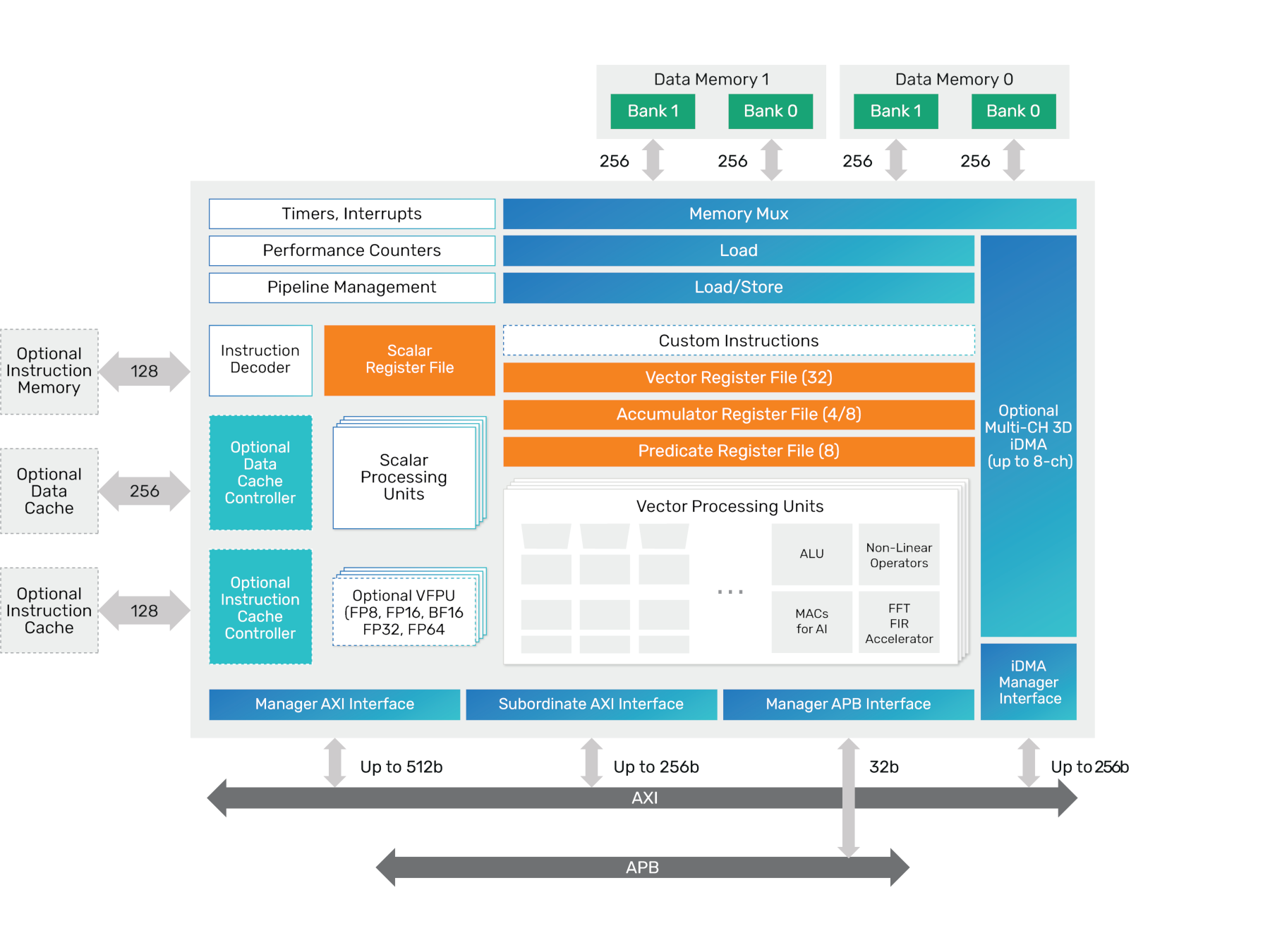

Cadence HiFi IQ Supports Both Immersive Audio and Lightweight LLMs

The Cadence Tensilica HiFi IQ is a high-performance, sixth-generation audio DSP designed to handle classical signal processing and modern AI workloads. Built on the Xtensa LX8 architecture, it offers a leap in AI throughput and energy efficiency, suiting it to chips for high-end automotive infotainment systems.

-

Byrne-Wheeler Report Discusses Nvidia-Groq Deal

Mike Demler joins Bob and Joe to discuss AI-chip juggernaut Nvidia hiring away key Groq staff and licensing the struggling startup’s innovative NPU technology.
