-

InferX Tackles the GPU Cold-Start Problem

Startup InferX’s software quickly and securely loads and unloads AI models on Nvidia GPUs, reducing idle time versus dedicating a GPU to a model.

-

Cost Cuts Come, Raptor Shines, Arrow Doubts Emerge in 1Q25 Intel Earnings Call

Newly installed Intel CEO Lip-Bu Tan (LBT) held his first earnings call, covering the company’s 1Q25 finances and discussing its outlook. The company plans to cut costs by reorganizing and reducing capital expenditures (capex). Product plans are unchanged, but what was omitted from the 1Q25 review revealed more than what was discussed.

-

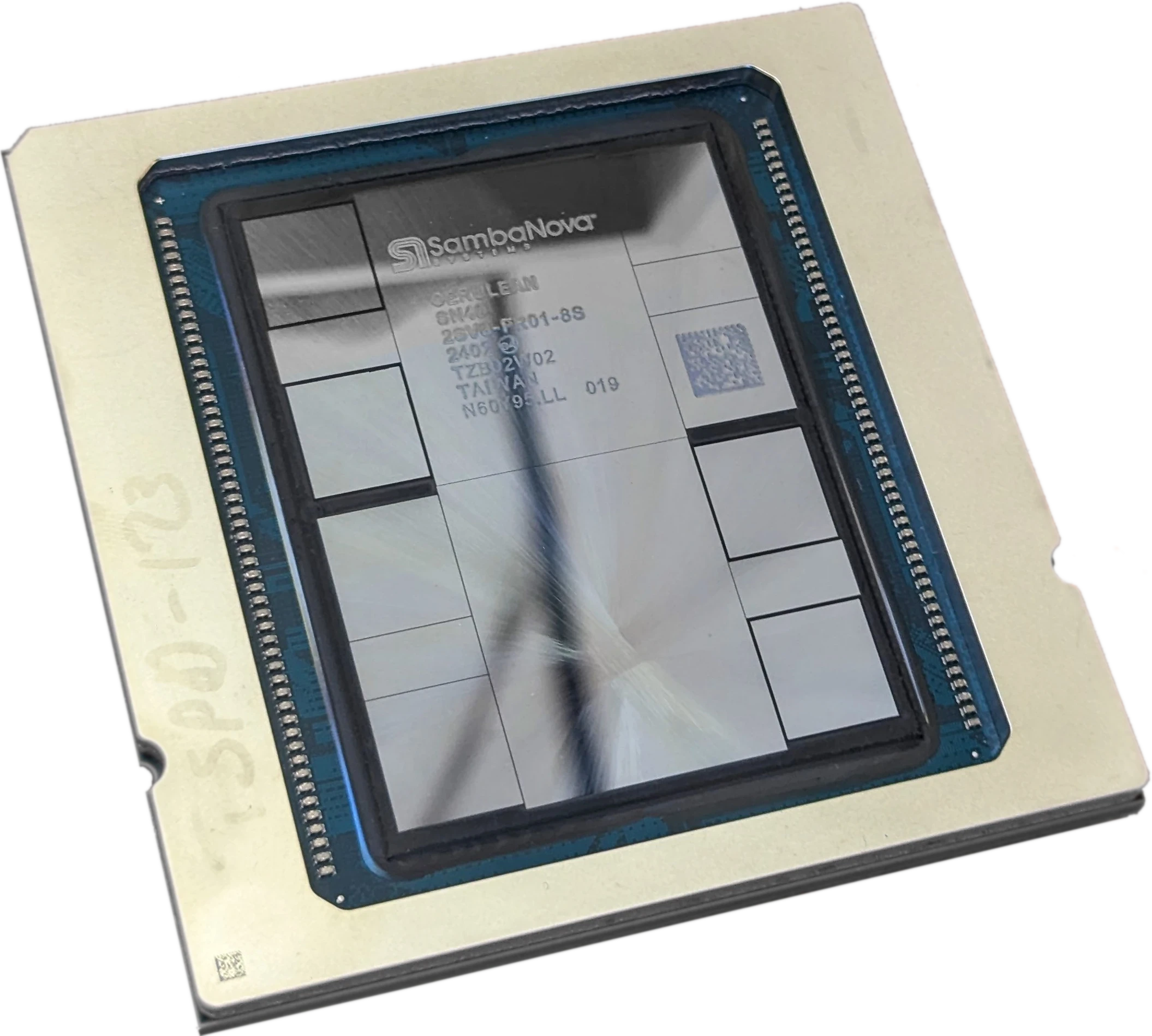

SambaNova Retreats from Training, Bets on Inference Services

SambaNova has reduced its workforce by 15% and refocused on AI inference services to navigate competition with GPU giant Nvidia. Employing a CGRA and three memory tiers, its architecture beats GPUs on paper, but its real-world advantages are unclear.

-

Huawei’s Ascend 910C and 384-NPU CloudMatrix Fill China’s AI Void

As Huawei ramps up its AI chip efforts with the Ascend 910C NPU and CloudMatrix 384 system, it seeks to fill a void created by the banning of Nvidia GPUs. Having evolved over the past 5+ years, the Ascend 910 is competitive only in AI inference despite its original aim to tackle training.

Sponsored Content and Plugs

- BWR 12: The Memory Episode

- Slash Server Costs Without Hardware or Software Changes

- Ceva Boosts NeuPro-M NPU Throughput and Efficiency

- MEXT Helps IT Leaders Find the Sweet Spot

- Chips Act Backs Chiplets
