HyperAccel Promises Power Efficiency Gains

HyperAccel seeks to displace Nvidia by delivering a lower-cost AI accelerator. Its Bertha 500 promises to double performance while raising power efficiency 12× compared with a Hopper-generation Nvidia GPU. The startup's NPU is based on its latency-processing unit (LPU) architecture and achieves these gains through 90% compute-unit utilization during inference. Bertha’s efficiency is a…

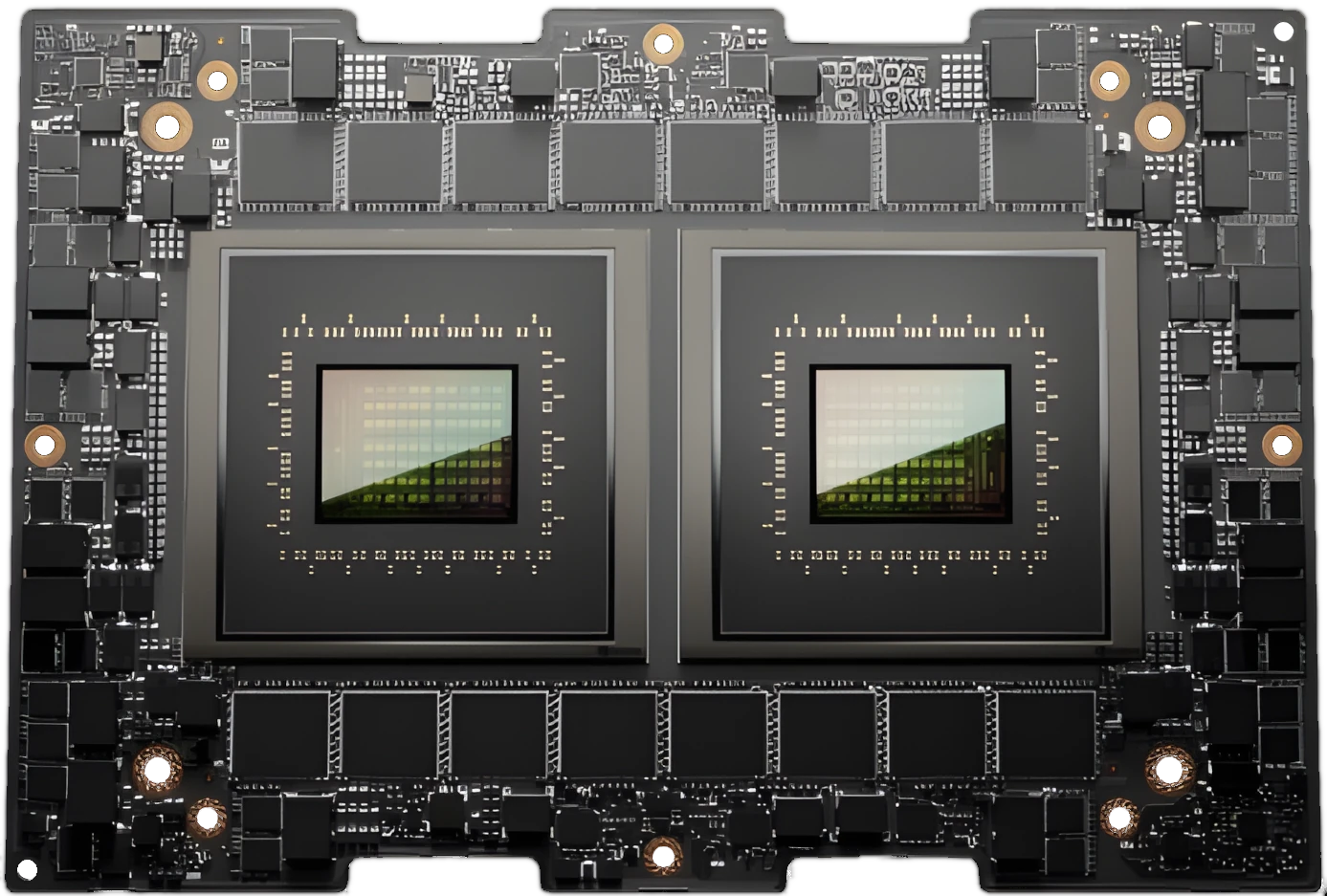

Will Tsavorite’s Composable AI Chiplets Be a GEMM Gem?

Tsavorite debuts its OPU (NPU) with $100M in orders, featuring Cuda support, Arm cores, and modular chiplet scalability.

Cadence ChipStack AI Super Agent Cuts Design Time

Cadence has released the ChipStack AI Super Agent, a productivity-boosting automated workflow for front-end chip design and verification.

